Author: Snap Analytics

Date Posted: Nov 13, 2023

Last Modified: Nov 22, 2023

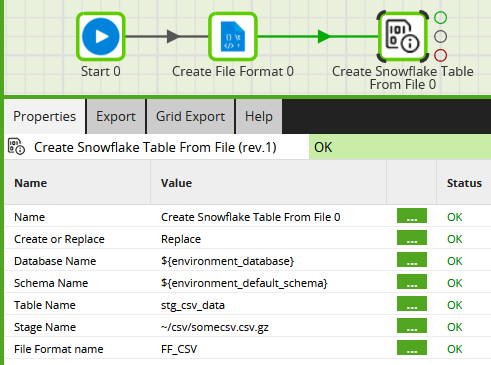

Create Snowflake Table From File

Create a Snowflake table from staged data file(s).

Call this shared job, published by Snap Analytics, from a Matillion ETL orchestration job to create a permanent relational table. The table's columns are inferred from the file(s) in a named internal or external stage, parsed using a named file format.

The shared job can parse CSV, JSON, AVRO, ORC, PARQUET and XML files.

Parameters

| Parameter | Description |
|---|---|
| Create or Replace | Enter “Replace” to use CREATE OR REPLACE TABLE. Any other value uses CREATE TABLE |
| Database Name | Name of the target database, or ${environment_database} to use the environment default |
| Schema Name | Name of the target schema, or ${environment_default_schema} to use the environment default |
| Table Name | Name of the table to be created |
| Stage Name | Name of the stage, optionally followed by a path. Do not include the leading @ character |
| File Format name | Name of the file format to use when parsing the staged file(s) |
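This page does not describe how the shared job works internally. As a hedged illustration only, the sketch that follows shows one plain-Snowflake way to get a similar result: Snowflake's INFER_SCHEMA table function combined with CREATE TABLE ... USING TEMPLATE. The helper `build_create_table_sql` is hypothetical and simply shows how the six parameters above could map onto such a statement. It assumes Matillion variables such as ${environment_database} have already been resolved, and that INFER_SCHEMA handles your file type (it may not cover every format listed above, XML in particular).

```python
def build_create_table_sql(create_or_replace: str, database: str, schema: str,
                           table: str, stage: str, file_format: str) -> str:
    """Build a CREATE TABLE ... USING TEMPLATE statement (hypothetical helper).

    Assumes Matillion variables such as ${environment_database} have already
    been resolved to concrete names before this function is called.
    """
    # Only the value "Replace" selects CREATE OR REPLACE; this sketch assumes
    # an exact, case-sensitive match.
    verb = "CREATE OR REPLACE TABLE" if create_or_replace == "Replace" else "CREATE TABLE"
    # The Stage Name parameter omits the '@', so prepend it; any path is kept as-is.
    location = "@" + stage
    # Column names and types come from INFER_SCHEMA, aggregated into the
    # array-of-objects shape that USING TEMPLATE expects.
    return (
        f"{verb} {database}.{schema}.{table}\n"
        "USING TEMPLATE (\n"
        "  SELECT ARRAY_AGG(OBJECT_CONSTRUCT(*))\n"
        "  FROM TABLE(INFER_SCHEMA(\n"
        f"    LOCATION => '{location}',\n"
        f"    FILE_FORMAT => '{file_format}'))\n"
        ")"
    )


if __name__ == "__main__":
    # Illustrative, made-up names for the database, schema, table, stage and file format.
    print(build_create_table_sql(
        "Replace", "MY_DB", "PUBLIC", "ORDERS", "my_stage/orders/", "my_csv_format"))
```

The helper does not quote or escape identifiers, so names containing spaces or special characters would need extra handling.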

Prerequisites

The file(s) to be analyzed must already exist in the Snowflake stage. It is best to run the job against one file at a time unless you are confident that all the files share an identical schema.
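If you do want to point the job at several files, a quick consistency check can help build that confidence. The following is a minimal sketch under stated assumptions: you have already obtained each file's inferred columns separately (for example, one schema-inference query per file), and the input shape, the helper name `files_with_different_schema` and the sample file names are all hypothetical. It compares column names, types and order against the first file.

```python
from typing import Dict, List, Tuple

# Hypothetical input shape: ordered (column name, data type) pairs per file.
Schema = List[Tuple[str, str]]


def files_with_different_schema(per_file: Dict[str, Schema]) -> List[str]:
    """Return the files whose schema differs from the first file's schema.

    Column names, data types and column order must all match.
    """
    items = list(per_file.items())
    if not items:
        return []
    _, reference = items[0]
    return [name for name, schema in items[1:] if schema != reference]


if __name__ == "__main__":
    # Made-up example data: the third file has a different type for AMOUNT.
    schemas = {
        "orders_2023_01.csv": [("ID", "NUMBER(38, 0)"), ("AMOUNT", "NUMBER(10, 2)")],
        "orders_2023_02.csv": [("ID", "NUMBER(38, 0)"), ("AMOUNT", "NUMBER(10, 2)")],
        "orders_2023_03.csv": [("ID", "NUMBER(38, 0)"), ("AMOUNT", "TEXT")],
    }
    print(files_with_different_schema(schemas))  # ['orders_2023_03.csv']
```

An empty result suggests the files can be analyzed together; any listed file is a candidate for processing on its own.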

The file format must also already exist. You can use a Create File Format component in Matillion ETL to (re-)create it before calling the shared job, as shown in the screenshot above.
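Outside Matillion, the same prerequisite can be met with a Snowflake CREATE FILE FORMAT statement. The sketch that follows is a hypothetical helper, `build_create_file_format_sql`, that emits a minimal statement and rejects any type other than the six formats this page lists. It deliberately leaves every format option at Snowflake's defaults; options such as Snowflake's PARSE_HEADER (relevant if you want CSV column names taken from a header row) are assumptions you would need to check against your own files.

```python
# The file types this page says the shared job can parse.
SUPPORTED_TYPES = {"CSV", "JSON", "AVRO", "ORC", "PARQUET", "XML"}


def build_create_file_format_sql(name: str, file_type: str) -> str:
    """Build a minimal CREATE OR REPLACE FILE FORMAT statement (hypothetical helper).

    All format options are left at Snowflake's defaults.
    """
    normalized = file_type.upper()
    if normalized not in SUPPORTED_TYPES:
        raise ValueError(f"unsupported file type: {file_type}")
    return f"CREATE OR REPLACE FILE FORMAT {name} TYPE = {normalized}"


if __name__ == "__main__":
    # Made-up file format name for illustration.
    print(build_create_file_format_sql("my_csv_format", "csv"))
```

Using CREATE OR REPLACE mirrors the "(re-)create" step described above, so rerunning the statement simply redefines the file format.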

Downloads

Licensed under: Matillion Free Subscription License

- Download METL-aws-sf-1.61.6-create-snowflake-table-from-file.melt

- Target: Snowflake

- Version: 1.61.6 or higher