Author: Ian

Date Posted: Jan 29, 2024

Last Modified: Feb 3, 2024

Full Load Data Replication Strategy in a Medallion data architecture

This article describes the technicalities of the full load data replication strategy in a Medallion data architecture.

Understanding Full Load Data Replication

Full load data replication is a simple strategy which involves copying the entire dataset from a source to a target system. This strategy is particularly useful in the following cases:

- To ensure consistency and integrity while avoiding complexity (note this is sometimes at the expense of worse performance)

- When you need to synchronize entire datasets

- After the source data has undergone significant structure changes (i.e. schema drift has occurred)

- If records are often deleted from the source system

This strategy can be used for source tables either with or without a primary key.

Full load replication does place load on the source system, as queries are executed against it to fetch the data. The load on the source system is heavier than with an incremental data replication strategy

Full Load Replication Data Architecture

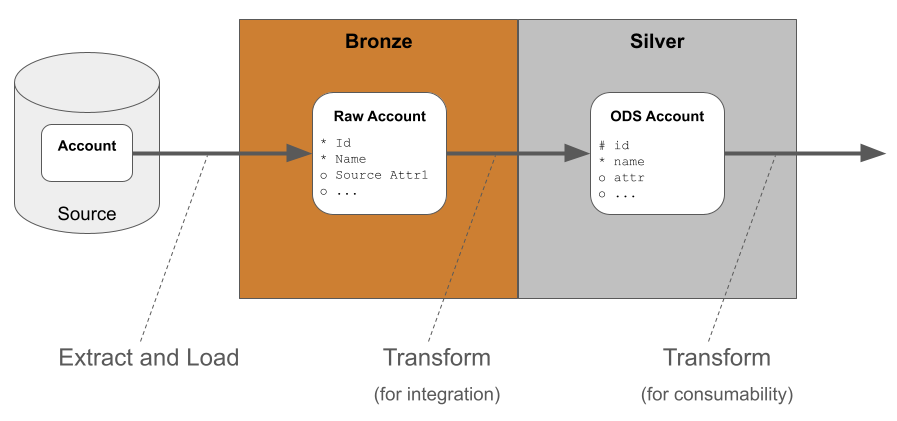

In full load data replication, data is first extracted from source and loaded into the bronze area of the target database. At this point the data is still in its original, raw, system-specific format.

After the extract and load, the data is transformed into its generic, system-neutral format in the silver layer. The transformations can be simple if the raw data is already similar to the desired target. Data transformations are likely to include datatype changes and naming standards.

Updates to the silver layer table can be made in two different ways:

- As a truncate-and-insert, in which the data in the target table is entirely replaced. This approach is simple and fast but can cause problems with data integrity after deletes (more on this subject shortly)

- As a MERGE operation, with either inserts or updates depending on a primary key. The main requirement is that the source table must have a unique identifier. To make the pipelines operationally robust it is important to include deduplication logic during this stage of data transformation

Note the naming alterations in the silver layer table, and the addition of a primary key constraint. For more information on the notation please refer to this article on Data Modeling Techniques.

Storing data in the silver layer does not necessarily make it any easier to read. So a second layer of data transformation - from silver to gold - is often used to render the data in a more consumable way. The output is usually a star schema, or completely denormalized (“flat”) tables - sometimes known as One Big Table or “OBT”’s.

From a DataOps perspective it is helpful to perform this second layer of transformation using database views.

Advantages of using a silver layer ODS table

I’ll take the example of an “Account” table. Using the ODS table as an intermediate has several advantages:

- It is a generic “Account” table rather than being source-system-specific. This enables you to much more easily integrate a second source of account data in future

- Using it to create convenient star schema objects as views means never having to manually refresh the star schema. This big DataOps advantage also means the logic is independent of the extract-and-load method. You could switch to an incremental data replication strategy at any time without any further refactoring

- Whenever the source schema does change (i.e. schema drift occurs) then you can deal with the changes in one place: the Bronze to Silver data transformations. This is easier than having to refactor multiple star schema objects - another DataOps advantage

In other words, separate stages of data transformation are used for integration and for consumability. Distinguishing between these two aspects is one of the hallmarks of a robust, production-grade data pipeline architecture.

Full vs Incremental loading

Full load data replication has the advantage of simplicity. Every load guarantees complete synchronization. The approach is more suitable for smaller datasets. It can be relatively resource-intensive for large datasets, with longer processing times.

In contrast, an incremental load strategy - characterized by tracking changes and filtering the extraction - is faster and more efficient, especially for larger datasets. Less data transfer means reduced resource utilization, and consequently faster processing times. Whether implemented logically (using a high water mark) or physically (using database change logs or change data capture), incremental loading increases the complexity of implementation.

Hard vs Soft deletes

The difference between hard and soft deletes lies in how records are handled when they are marked for removal. The difference can have an important impact on data replication.

- A hard delete is simple: the record is permanently removed from the database. This results in the immediate and irreversible elimination of the data

- In contrast, in a soft delete, instead of physically erasing a record, it is flagged to indicate that it is no longer active or valid. The metadata is captured in a status field

Hard deletes ensure cleaner and more efficient storage. They may be required for operational reasons, for example data privacy laws. Hard deletes result in a loss of historical context, and can pose challenges if audit trails or historical analysis are needed. A soft delete allows both the retention of historical information and ongoing referential integrity. But consumers of the data are expected to use additional logic to filter out deleted records during queries. One well known example of soft deletes occurs in a slowly changing dimension.

Hard deletes are handled by full load data replication provided you use the truncate-and-insert option to update the silver layer table. The DataOps risk to be aware of is that you may end up with records in other silver layer tables that reference deleted records.